How to empower your citizen developer for agile business intelligence

Continuous data integration for faster business insights is critical to support evolving BI requirements found in reporting and analytics

Introduction: Legacy BI systems

More than ever, organizations face constant pressure to do more with less, often looking to technology to operate more effectively and efficiently. Across functions, people seek the power to pick and choose the tools they need to get better, faster. And they’re not asking for permission from IT.

In a recent PMG survey of enterprises, 40% of respondents said that they were interested in cultivating citizen developers in their organizations, and 20% of organizations were actively using them. Just as significantly, 60% of all organizations reported that they were already using “low code” solutions to develop their reports, which enables their end users to take on part of the IT application load.

Business intelligence (BI) tools have been major enablers of this shift to citizen developers within the ranks of company user departments—but legacy BI systems that have served organizations well over the last two decades are struggling to keep up with new requirements found in faster reporting, analytics, data science, user experience, new storage technologies, growing data sources, and more. The shift to “more BI” hasn’t come without its share of growing pains.

This is because BI in its own right can become an impediment when it comes to getting at all of the “right” data.

Overcoming a major BI constraint – data silos

In a very real sense, BI is a silo. A BI tool “works” when all of the data is stored in the tool’s own memory. To populate this memory, all of the requisite data from every source outside the BI tool must be moved into it. Before any data can be moved, it must be prepped, cleansed and normalized. If different user groups use multiple BI solutions, this process repeats for each one. Inevitably, data integration becomes a major burden, and the business inherits a plethora of BI silos distributed throughout the company that might not even contain consistent data. Inaccurate data produces inaccurate results, and inaccurate results can lead to erroneous business decisions and distrust of data.

While no- and low-code application development tools for end business users can facilitate some simple data integration, getting at all of the required data sources to perform a comprehensive BI query isn’t a task that is easily tackled by a citizen developer. Data integration—and the elimination of inconsistent data and BI data silos—is still often a job for IT.

But how can you accelerate BI and analytics for the business in an environment of hybrid computing that mixes and matches diverse data sources from internal systems, cloud-based platforms, edge IoT and outside services?

Facing the data integration challenge

Let’s start at the heart of BI data integration, which essentially comes down to two fundamental data pipelines:

- Systems of record: systems with detailed and established rules;

- Systems of innovation: self-service systems that are set up by business users when they can’t wait for IT.

How data is sourced isn’t nearly as important to the user as its accuracy, its timeliness and the ability to act on it with BI tools.

To facilitate these timely and actionable answers, IT must seamlessly integrate a myriad of data sources, so all of the “right” data can be brought together in a common data repository that users throughout the business can access when they perform their BI.

The key to achieving speed-to-value with BI

Fortunately, there is a way to move high-quality data into your BI quickly so your organization can make high-quality business decisions with both agility and precision. These goals are not mutually exclusive.

You can do this in your data architecture by moving all data to a common data repository (or warehouse). By using a single repository for data, all users and applications accessing this data are working with the same high quality data. A common and uniform data repository also enables you to execute governance, data preparation, and maintenance policies around this central repository, and to do it only once.

Integrating and moving your data

A well-maintained central data repository or warehouse brings data in from diverse sources and rapidly populates smaller caches of data for BI and other applications. Its success also depends on a middleware platform that automates data integration, which IT would otherwise need to hand-code laboriously.

HULFT Integrate is a modern data integration and ETL (extract, transform, load) tool that enables this.

Customers using HULFT Integrate access hundreds of data integration adapters that provide immediate access to a wide range of contemporary and legacy applications and data sources. These pre-defined adapters enable IT to focus on data transformation—not on writing custom code to connect to diverse data stores. HULFT adapters simplify integration by decoupling applications and databases from one another during the data integration process, whether that process is performed in real-time or batch.

HULFT Integrate rapidly extracts data from diverse, distributed sources; transforms the data through automated data manipulation, parsing and formatting rules that you predefine; and loads the data into staging databases. From there, data summarizations and analytical processes driven by your business rules can automatically populate the data warehouses and data marts that drive BI and other applications. This gives diverse sets of users data exactly as they want it, when they want it.
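The extract, transform, load and summarize flow described above can be illustrated with a minimal, generic sketch. It uses only the Python standard library and is not HULFT Integrate's API; the sample data, the table names (`staging_sales`, `mart_sales_by_region`) and the cleansing rule are all hypothetical assumptions made for illustration.

```python
import csv
import io
import sqlite3

# Hypothetical source extract; a real pipeline would read files, APIs or databases.
RAW_SALES_CSV = """region,amount,sold_on
 north ,100.50,2024-01-05
South,200,2024-01-06
north,,2024-01-07
SOUTH,50.25,2024-01-08
"""


def extract(text):
    """Extract: parse raw CSV text into a list of dict rows."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: normalize region names and drop rows that have no amount."""
    cleaned = []
    for row in rows:
        amount = row["amount"].strip()
        if not amount:
            continue  # assumed rule: skip incomplete records
        region = row["region"].strip().lower()
        cleaned.append((region, float(amount), row["sold_on"].strip()))
    return cleaned


def load(conn, rows):
    """Load: write the cleaned rows into a staging table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS staging_sales "
        "(region TEXT, amount REAL, sold_on TEXT)"
    )
    conn.executemany("INSERT INTO staging_sales VALUES (?, ?, ?)", rows)


def build_mart(conn):
    """Summarize staging data into a small data mart that a BI tool could query."""
    conn.execute("DROP TABLE IF EXISTS mart_sales_by_region")
    conn.execute(
        "CREATE TABLE mart_sales_by_region AS "
        "SELECT region, SUM(amount) AS total_amount, COUNT(*) AS order_count "
        "FROM staging_sales GROUP BY region"
    )
    return conn.execute(
        "SELECT region, total_amount, order_count "
        "FROM mart_sales_by_region ORDER BY region"
    ).fetchall()


def run_pipeline(text=RAW_SALES_CSV):
    """Run extract, transform, load and summarize against an in-memory database."""
    conn = sqlite3.connect(":memory:")
    load(conn, transform(extract(text)))
    result = build_mart(conn)
    conn.close()
    return result


if __name__ == "__main__":
    print(run_pipeline())
```

Because the cleansing rule lives in one `transform` step ahead of the staging table, every downstream mart sees the same normalized region names, which is the consistency benefit the central repository is meant to provide.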

Because HULFT is technology agnostic, users get access to anything they want—whether they are accessing data through Web services, BI tools, or other applications.

Modern BI use cases with HULFT Integrate

Organizations across the industry spectrum are experiencing success by combining uniform data in a central data repository with HULFT Integrate, which imports data from multiple sources into the central repository and then publishes it out to BI applications.

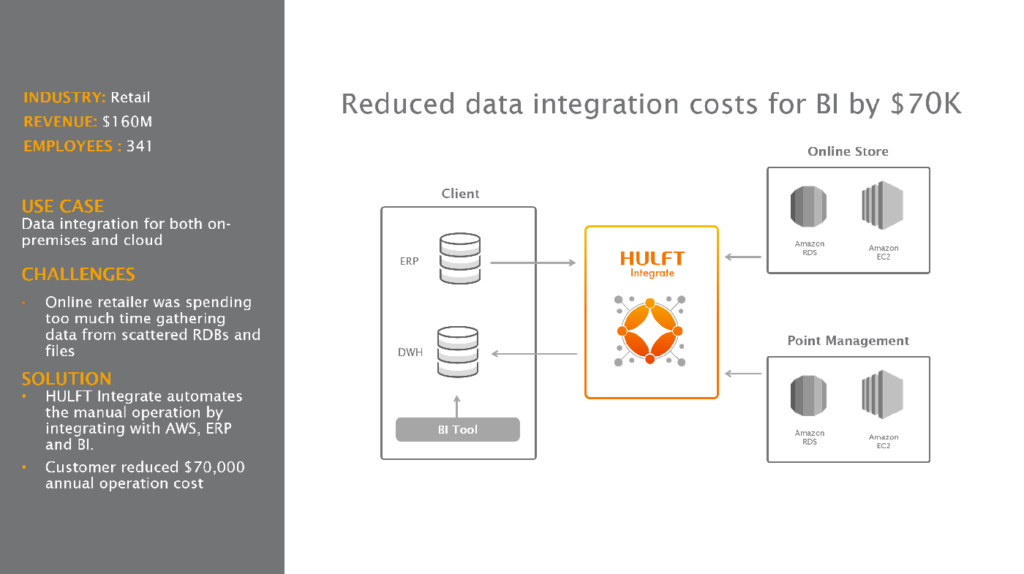

Retailer reduces data integration costs for BI by nearly $70K per year

A global retailer was losing time to insight from its reporting because it was struggling to move data from an assortment of files and relational databases into BI tools. As more of the company’s applications and data were moved to the cloud, it was also difficult to combine data from cloud services like AWS with data from internal systems like ERP.

By using HULFT Integrate to automate data movements between AWS, ERP and BI, the customer succeeded in eliminating the bottlenecks in getting data from sources and moving it to target applications. It reduced its operational costs by nearly $70K per year.

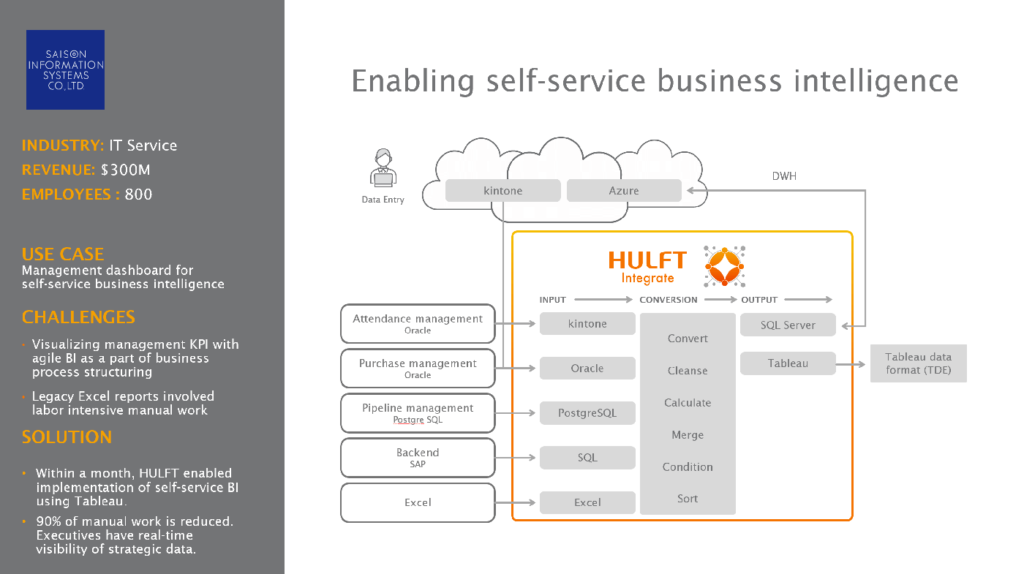

Global conglomerate creates self-service BI for all business users

Saison Information Systems (HULFT’s parent company) wanted to create a self-service BI platform for users in business departments throughout the company so they could monitor performance against their respective KPIs (key performance indicators). To do this, the company had to import data from a host of systems that ranged from Oracle attendance management and purchase management to PostgreSQL pipeline management, Excel spreadsheets and a backend SAP ERP system.

Integrating all of these systems was tedious and time-consuming. IT lacked agility and couldn’t match the speed of business.

The initial concept was to customize Excel spreadsheets to meet these needs, but the amount of labor that would be required made goals such as business agility and rapid time to insight unworkable for the team.

Saison Information Systems solved the problem by using HULFT Integrate to convert, cleanse, calculate, merge and condition incoming data from all of its source systems. HULFT Integrate then exported this data to a cloud-based Azure SQL server that served as a single data repository for a self-service Tableau BI platform. The project took just one month to complete. It gave real-time visibility of company performance to executives across the enterprise, and reduced manual work by 90%.

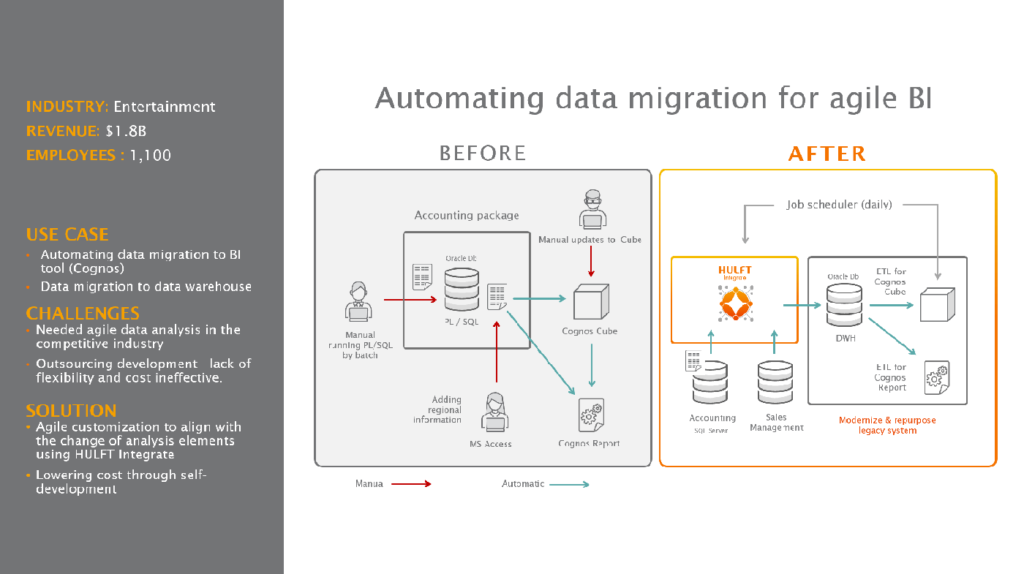
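The convert, cleanse, calculate and merge steps in this case study can be sketched generically. The example below is an illustration under stated assumptions, not the Saison implementation or HULFT Integrate's API: the two source layouts, the field names and the attainment KPI are all hypothetical.

```python
from collections import defaultdict

# Hypothetical rows as they might arrive from two differently formatted sources.
PIPELINE_ROWS = [
    {"dept": "Sales ", "deal_value": "1,200.00"},
    {"dept": "sales", "deal_value": "800"},
    {"dept": "Support", "deal_value": "300.50"},
]
TARGET_ROWS = [
    {"Department": "SALES", "Target": 2500},
    {"Department": "support", "Target": 300},
]


def normalize_dept(name):
    """Cleanse: trim whitespace and lowercase so keys match across systems."""
    return name.strip().lower()


def parse_amount(value):
    """Convert: turn values such as '1,200.00' or 2500 into floats."""
    return float(str(value).replace(",", ""))


def merge_kpis(pipeline_rows, target_rows):
    """Merge both sources by department and calculate attainment against target."""
    actuals = defaultdict(float)
    for row in pipeline_rows:
        actuals[normalize_dept(row["dept"])] += parse_amount(row["deal_value"])

    merged = []
    for row in target_rows:
        dept = normalize_dept(row["Department"])
        target = parse_amount(row["Target"])
        actual = actuals.get(dept, 0.0)
        merged.append({
            "dept": dept,
            "actual": actual,
            "target": target,
            # Calculate: percentage of target reached, or None if no target is set.
            "attainment_pct": round(100 * actual / target, 1) if target else None,
        })
    return sorted(merged, key=lambda r: r["dept"])


if __name__ == "__main__":
    for kpi in merge_kpis(PIPELINE_ROWS, TARGET_ROWS):
        print(kpi)
```

The merged rows share one key format and one numeric type, so they could be written to a single repository table and read by a self-service BI tool without per-report cleanup.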

Entertainment company reduces costs and simplifies data import and export

An entertainment company wanted a way to keep pace with rapidly changing business demands and data. It also wanted to preserve its legacy system investments, and to use its own staff in managing and transforming data.

Its goals were to avoid project outsourcing and to automate the movement of data from disparate systems to a single data warehouse that Cognos BI tools could access for batch and on-demand queries about business conditions.

The customer used HULFT Integrate to extract, transform and load data from its accounting and sales systems into its Oracle enterprise system, which became the data warehouse. It then moved data from the Oracle warehouse to Cognos BI reporting tools and also to Cognos Dynamic Cubes, which enabled high-performance interactive analysis of data.

The project eliminated the need for manually supported batch Oracle PL/SQL queries and for the manual Microsoft Access data imports that had been required to view regional sales performance. Automating data movements into a central warehouse also streamlined data extracts to Cognos BI tools. Just as significantly, the customer found a way to preserve its legacy systems and to control its own data agility with its own staff.

The bottom line

Today’s data-driven decisions must match the speed of business. This is why companies are embracing citizen developers who can adroitly advance business intelligence by using agile, self-service BI tools.

However, you can’t enable agile, self-service BI tools and get ahead of your application development curve without an automated data integration, transformation and loading tool. Best-of-breed data integration tools can integrate, transform and load high-quality data from and to different sources at the speed of business. It’s what HULFT Integrate is built to do.

If you’re interested in a free consultation on how to meet compliance, please contact us at info@saison-technology-intl.com